Complaints About Misuse Of AI By Hawaiʻi Lawyers Growing

By Stewart Yerton

Hawaiʻi is seeing an increase in complaints against lawyers accused of improperly using artificial intelligence programs to help produce documents, but the state court system has yet to take decisive action to address the problem.

A lawyer with one of the state’s oldest and most prestigious law firms recently made a startling confession: After opposing counsel pointed out “a disturbing number of fabricated and misrepresented” case citations in the lawyer’s brief filed with a Maui Circuit Court, the lawyer admitted he had used an artificial intelligence program to research and draft the document.

Honolulu lawyer Kaʻōnohiokalā Aukai IV asked Judge Kelsey Kawano to disregard all six of the brief’s cited cases. Two of the cases appear to be completely fabricated “AI hallucinations.”

“I sincerely regret the oversight,” wrote Aukai, an associate with Case Lombardi, in a declaration. He told the court he intends to confirm the accuracy of case citations he submits to the courts “going forward.”

In the end, the error amounted to nothing. Kawano ruled for Aukai anyway and didn’t impose sanctions for submitting erroneous citations to the court, although the Hawaiʻi Rules of Civil Procedure allow them. Aukai did not respond to a call for comment.

The opposing lawyer, Michael Carroll, commended Aukai for correcting the record before Kawano ruled.

“They did the right thing,” he said.

Still, the flap, which has become the subject of water-cooler talk in Honolulu legal circles, underscores a critical issue Hawaiʻi judges and lawyers are grappling with: How to use a tool with the power to exponentially enhance productivity when that tool also is widely known to make monumental errors.

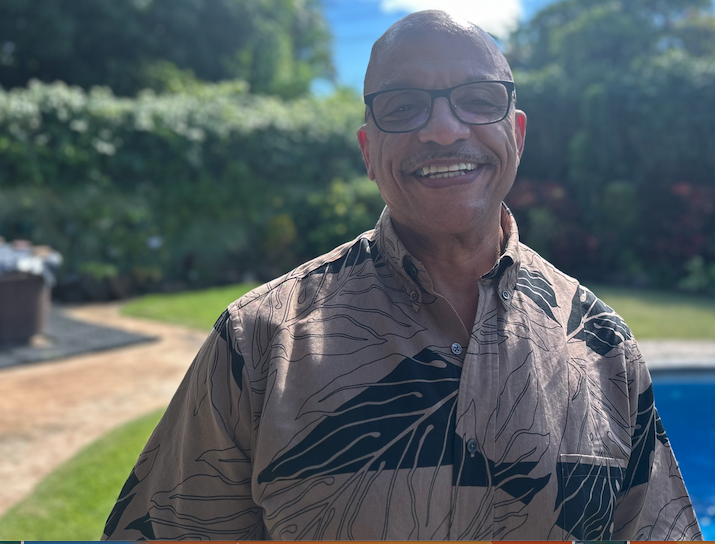

At stake is the credibility of the courts and lawyers at the heart of the legal system. It’s a small but growing problem in Hawaiʻi, says Ray Kong, chief disciplinary counsel for the state office in charge of enforcing rules governing lawyers.

While Hawaiʻi’s federal courts have drawn a hard line, the state judiciary is studying the issue, with a final report due in December.

‘You Cannot Rely On AI. It’s A Disaster’

In a recent case showing the consequences of AI errors, a Georgia judge ruled against a wife in a divorce case based on fake law. The Georgia Court of Appeals on June 30 cited the fake law, which it presumed was generated by AI, when it overturned the lower court’s order. It also dinged the husband’s lawyer with a $2,500 sanction, the most allowed in that instance.

The appellate court said that lawyers’ use of fake, AI-generated citations “promotes cynicism about the profession and American legal system.”

Hawaiʻi federal courts have issued a clear order: Lawyers must tell the court when they use AI to produce any court document and verify that they’ve confirmed material in the document “is not fictitious.” Lawyers who submit fictitious material face sanctions under the Federal Rules of Civil Procedure, the order indicates.

But Hawaiʻi state courts, which handle the vast majority of cases here, are still figuring out what to do.

In April 2024, Chief Justice Mark Recktenwald established a Committee on Artificial Intelligence and the Courts, chaired by Supreme Court Justice Vladimir Devens and First Circuit Court Judge John Tonaki, to study the issue and prepare a final report in December.

An interim report that was due in December 2024 is still a “work in progress,” Brooks Baehr, a spokesman for the judiciary, said in an email. Asked how a report the chief justice ordered to be completed in December 2024 could be a “work in progress,” Baehr said the report is “a working document — and not available for release.”

Judges are dealing with AI hallucinations case by case, and the judiciary can’t say how many they’ve encountered, Baehr said in an email.

Baehr declined an interview request.

The chief justice has issued initial guidance on the use of AI by lawyers. It points to existing guardrails like ethics rules requiring candor to the courts. Attorneys who make false statements to the courts can also face sanctions under the Hawaiʻi Rules of Civil Procedure.

“These obligations remain unchanged or unaffected by AI’s avalability [sic] and are currently broad enough to govern its use in the practice of law,” the chief justice wrote.

But, as the Case Lombardi incident shows, sanctions are hardly a given, even when a document submitted to the court is based almost entirely on erroneous or fake law.

Some local firms have created internal rules for using AI in their offices, particularly for researching and drafting memos to clients and documents submitted to courts.

“You shouldn’t use these things — period — in any sort of professional work,” said Paul Alston, a partner in the Honolulu office of Dentons, the world’s largest law firm. “You cannot rely on AI. It’s a disaster.”

National experts agree.

“People are trusting it a little too freely, especially young lawyers,” said Nancy Rapoport, a law professor at the University of Nevada, Las Vegas’ William S. Boyd School of Law, who has studied the use of AI in legal practice.

Even when programs don’t make up cases entirely, they can misstate what real cases say “in pretty spectacular fashion,” she said.

AI Can Increase Efficiency

The flip side is that AI can also speed up work done by lawyers in pretty spectacular fashion, said Mark M. Murakami, the president of the Hawaiʻi State Bar Association, who also serves on Recktenwald’s AI committee.

Reducing the time lawyers have to spend on some tasks can free them to serve more clients, he said, a point Recktenwald made in his guidance to lawyers. And not all tasks are susceptible to the “AI hallucinations” that can plague court pleadings and client memos.

For instance, Murakami said, he used AI to prepare for questioning a trial witness. He had the program analyze a transcript and help craft questions — reducing a task that could have taken an hour to a mere eight minutes.

But lawyers in the U.S. and abroad are increasingly using AI for more than such internal tasks, said Damien Charlotin, a French researcher tracking the issue. Charlotin has created an online database of legal decisions in which courts said lawyers filed documents that included “hallucinated content —typically fake citations, but also other types of arguments.”

He’s found more than 230 cases so far globally, including 141 in the U.S., but acknowledges there are probably more; he generally tracks only opinions, not the wider universe of fake citations used in all court filings.

Unlike Hawaiʻi’s Kawano, some judges have imposed sanctions, referred matters to disciplinary boards or both. A federal court in California, for instance, levied $31,100 in sanctions against two firms that used AI tools to create a brief containing a half dozen hallucinated citations.

But such sanctions are rare, Charlotin, a senior research fellow with HEC Paris business school, said in an email.

“Surprisingly enough, I think there has been a hefty dose of leniency so far,” Charlotin said.

When sanctions are imposed, he said, it has typically been “in the (many, and that was surprising to me) cases where the party refused to own up to it, doubled down, lied, or blamed the intern.”

Hawaiʻi appears to be no exception. There’s only one Hawaiʻi case in which a lawyer was sanctioned for using a single fake case citation, possibly generated by AI. The lawyer owned up to the mistake, apologized and agreed to be sanctioned, even though the applicable rule of civil procedure didn’t allow sanctions at that point in the proceeding. He was fined $100.

‘Corrosive To The Reputation Of The Judicial System’

Some say the leniency needs to stop.

“The courts need to take aggressive steps to stop people from doing this,” said Paul Alston, the Dentons partner. “It’s so corrosive to the reputation of the judicial system. The consequences of doing it need to be severe.”

Ken Lawson, who teaches professional responsibility at the University of Hawaiʻi’s William S. Richardson School of Law, agrees.

In addition to violating rules of civil procedure, submitting fake, AI-generated cases in briefs raises numerous issues concerning the Hawaiʻi Rules of Professional Conduct, which are administered by the Hawaiʻi Office of Disciplinary Counsel, Lawson said.

“There are so many ethical violations involved because it means that you never read the case — because the case doesn’t exist,” Lawson said.

Another rule involves fees.

“How much did you charge your client for this motion that had no accurate law in it, not a single case?” Lawson said.

The purpose of the ODC is to investigate such questions, he said.

“The more the courts become aware of some of these issues, I would expect some of the judges will start referring cases to disciplinary counsel,” he said.

So far, the ODC has received a handful of complaints about lawyers improperly using AI, said Ray Kong, the office’s chief disciplinary counsel.

“I wouldn’t say it’s widespread,” he said. “But it’s happening more and more.”

The complaints generally involve fabricated case citations or ones in which the case is real but doesn’t say what the lawyer claimed, Kong said.

“Even if it’s unintentional, you’re still misrepresenting a case,” he said.

Finally, Lawson and Alston said, there’s the question of supervision by senior lawyers. While Aukai took the blame for the error, Lawson and Alston said the senior partner on the case, Michael Lam, bore ultimate responsibility.

If Lam had properly reviewed the brief, they said, he would have caught the errors before the brief was submitted to the court with his name on top.

“Partners have an ethical responsibility to properly supervise,” Alston said. “It’s sloppy from start to finish.”

Lam declined to comment, saying the court record speaks for itself.