All news

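AI, politics and the battle against misinformation

The discussion centres on the role of social media and artificial intelligence in elections, and how they affect voter participation and election outcomes. The main points of the conversation are summarised below:

1. **AI technology and disinformation**: The expert interviewed notes that although current AI technology has not yet had the deceptive effect that was expected, it could influence voters more deeply as the technology advances.
2. **Platform responsibility**:
   - Platform owners need to build clear safeguards to prevent the generation of inaccurate information, especially on key details such as when and where to vote and who is running.
   - Platforms should be more transparent about how algorithmic prioritisation works, and companies should have professional trust and safety teams monitoring potentially harmful information.
3. **Challenges in electoral systems**: Beyond technology, many other systemic problems affect voter participation, such as obstacles to voting from abroad. False information can exploit these weaknesses to further erode public confidence and motivation.
4. **Negative effects of algorithms**:
   - Existing social media recommendation mechanisms tend to surface negative, hateful content to drive user engagement.
   - Political campaigns can use this emotionally charged distribution to attack opponents without taking the hit in the polls that attack ads normally bring. This makes election strategies built on stoking fear and resentment easier to pursue.
5. **Proposed solutions**:
   - Improve platforms' speed and capacity to respond to false information.
   - Given the complexity of regulation, start by increasing algorithmic transparency and giving users a choice among different information sources, rather than enforcing a single standard.

The conversation highlights how technology can be used to manipulate public opinion and, against that backdrop, sets out several countermeasures, including stronger regulation, greater public awareness and better platform mechanisms, to protect the fairness and integrity of the democratic process.

The Financial Times held a Future of AI summit in London on November 6-7. Below we publish an edited transcript of a conversation about AI, politics and the battle against misinformation between Javier Espinoza, the FT's EU correspondent covering competition and digital policy, and Elizabeth Dubois, professor at the Centre for Law, Technology and Society at the University of Ottawa.

Elizabeth Dubois: I research the political uses of new technology, so that includes AI, but also social media and search engines and all kinds of other communication technologies that have been infiltrating our electoral systems. And so, recently, I wrote a report looking at AI uses in Canadian politics. But, as you've mentioned, I've obviously been following the US election to see what's on the cusp - and what we can expect in others coming forward.

Javier Espinoza: And it's the perfect timing, I think, for our conversation. Today, we have fresh news and, apart from Trump being re-elected ... Elon Musk has emerged, in my view, as the other big personality, character, player that has influence. I don't know to what degree, but he has been a player in this election like we have not seen before - also, with X [formerly Twitter] as a platform to help.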
I don't know how many of you are on X, I know that the numbers are dwindling, but the algorithm in my X profile is as if I'm a Republican. What are your thoughts on this?

ED: It's a really interesting example because people with a lot of money have had a lot of influence in US politics for a long time. That's not new. And social media companies being this controller of information, they decide what to incentivise ... what to prioritise in your feed. That's also not new. But the combination of those things has really played out in a way that I don't think we were fully prepared for or fully expected. You mentioned your feed looks like you are a Republican. And we know that that's the case in a lot of people's feeds - even though there are roughly equal numbers of Democrats and Republicans that are reporting through surveys to be using those tools. So it's really interesting to see the power of those algorithms. It really shows that our information environment is controlled by these systems, and sometimes by particular people when they take over a large company, for example.

JE: And do you think we're just learning about the effects that X and Musk have had on this election? Do you think that it's about the number of people that he managed to reach through the platform, or is it about mobilising the ones that are already converted? What are your thoughts on ... the use of this platform and also misinformation?

ED: Yeah, I think that X is an example of the larger kind of misinformation conversation, where a lot of the most effective misinformation and disinformation right now is actually about mobilising particular communities and convincing people in specific groups to believe one thing or another. It's not, at this point, as much about creating a mass misunderstanding of reality, but rather getting certain people who are highly active, who have a loud voice, to be sharing this information and resharing it over and over.
Elon Musk joins Donald Trump on stage at a Pennsylvania rally in October. The president-elect has since offered the owner of X a role in government © Jim Watson/AFP via Getty Images

JE: I was having a discussion just earlier this morning with someone from the UK regulatory part of the government who was saying that, in their research, they have noticed that people might be seeking out exposure to deepfakes or to misinformation on purpose and excluding the verifiable ... highly sophisticated information that the Financial Times and other media outlets are producing every day. Have you picked this up in your research?

ED: Yeah. So one of the things about disinformation research is we really think everyone's going to want true content, right? If we just have enough high quality content, it'll be fine. But the reality is people like to be entertained. People like to feel community. People like to have their ideas supported and reinforced. So there's a bunch of reasons that people are going to intentionally choose or just not kind of question disinformation.

JE: And I guess this sort of amplifies the use and the efficiency that we're talking about in terms of X. But, moving beyond X and the elections today, can you ... flesh out some of the ways that you've identified people, agents or countries are using misinformation, and playing with algorithms?

ED: Yeah. So, when we're thinking about AI and disinformation, the immediate idea is "Oh, it's the deepfakes". And, absolutely, deepfakes are happening. We have seen examples, even in this US election. But AI use goes beyond that. The thing that we've seen emerging is people making use of generative AI tools like ChatGPT as a search engine, and we know that those tools often hallucinate, often produce information that has inaccuracies in it or that lacks contextual information. So you end up with often "true" misinformation.
There isn't an intent to harm necessarily, but nevertheless, people are being sent to polling stations on the wrong day, as an example.

JE: Wow. That's quite shocking to hear. In your research, what counts as AI in elections? Give us a little bit more ...

ED: There's a lot of political software that embeds AI technologies into its systems to help a campaign better target, or better profile, potential voters - and people to not pay attention to. We also have examples of AI being used for translation or creating robocalls to make it easier to reach greater [numbers of] people, which could be good - we could see that is a very helpful democratic thing if you're engaging communities that speak particular languages that maybe no candidates speak. But it can also be very deceptive and confusing for people. And, then, we have this whole group of conversational agents or spoken bots that we're starting to see emerge. In the last Mexican presidential election, for example, there was a presidential candidate who created an AI-powered "spokesbot" to literally be a spokesperson for her and her campaign. And that really changes the landscape of information.

JE: How effective was that? Was the candidate elected as a result?

ED: The candidate was not elected. With all of these kinds of tools, it's going to be really hard to say that AI was the thing that gets someone elected or doesn't get someone elected. There are so many different versions of these kinds of tools, and they're embedded into really complex campaign structures.

JE: But what we do know is it is changing the way that we can interact with political candidates. Do you think - and I know that we have to do the research but let's speculate a little bit ... so we can ... at least think about these things ... I mean, we're talking about AI now but ... the Brexit vote arguably was also influenced by social media, and the outcome. Do you think that ...
we are seeing outcomes in elections that we wouldn't have, if we didn't have these new emerging technologies or ways of disseminating misinformation?

A 2018 "Leave Means Leave" rally. Anti-Brexit campaigners have claimed that misinformation on social media affected the vote © Daniel Leal/AFP via Getty Images

ED: There's absolutely no denying the fact that technology impacts elections and the way campaigns are run. It's really hard to tease apart what is the main thing that changed the result of an election. But it is very clear that the way these new technologies are being integrated into campaigns changes how campaigns run. It changes how journalists report on campaigns, and it changes how the public interacts with the information that comes out of those systems. So absolutely, we're seeing impacts now. Does mis- or disinformation impact elections to the point where we can't trust the results or question the integrity? I think that, so far, what we've seen, particularly in the recent US election, is that AI, in terms of its deceptive ability and the way it's being used, is not having the kind of impacts or the kind of disordering effects that we initially expected. But that doesn't mean that it's not going to change as these tools evolve and are integrated into our daily lives.

Audience question: What more do you think the platform owners themselves can do to combat misinformation and disinformation?

ED: I'll start first with AI and particularly generative AI tools. I think there needs to be very clear safeguards built into the system so that it is not able to hallucinate or offer inaccurate information or decontextualised information, particularly when it's relating to how people can vote, and when they should vote, and who's running in their elections. Those are really essential pieces of information that will undermine the integrity of an election.
Then we go to the larger question of mis- and disinformation being spread across all kinds of social media and search, and that's a much more tricky one. I think one option is to have increased transparency and clarity on how prioritisation and deprioritisation algorithms work. Let's make sure there are trust and safety teams that these companies support and make use of, to make sure that when potentially harmful information is being spread across those platforms, they're actually responding to it. I also think that, at some point, there may need to be more substantial governmental regulation coming in, because we know that these platforms each make individual choices, and that the change in leadership in one of these organisations can drastically shift the information environment very quickly, which - in the context of an actual election campaign - could be really risky.

Audience question: As we've seen in the US election, in states like Georgia, we have seen genuine disruption ... people being discouraged from voting. And that has had a serious effect. Have you got any general comments about how we can go further to minimise and mitigate that?

ED: In terms of ... Georgia, I would take it even broader and say, looking at the US election and potential impacts on people's ability to vote, we know that it extends beyond the uses of AI. And there are electoral systems which make it difficult for people living abroad to get ballots in time, as an example. There's a variety of things that I think come into play when we're thinking about whether or not people were informed enough to get their vote cast. That's not something that's brand new. But it is something that ... mis- and disinformation can be used to exacerbate because information can travel so quickly. With AI in so many different formats online, it can be really hard to track what is true and know how to execute the next steps.

Audience question: The algorithms in social media, they reward engagement, right?
And, a lot of times, there's a bias in humans where they are more drawn to negative, hateful, provocative content. Therefore, the algorithms, like social networks, can just say: "Oh, we're just repeating what people want to see". And so politicians that kind of exploit negativity and hate benefit from that. Do you think that the actual algorithms themselves need to be regulated to protect against that?

ED: It's a great point. It makes me think about the research that's been done on attack ads. Political attack ads are known to be very effective at making people not trust or not want to vote for whoever is being attacked. But there's a rebound effect where whoever created the attack also takes a hit in the polls. So that has been a bit of a natural deterrent to using too much of that sort of negative campaigning. But what we see in online systems is that it's a lot easier for political campaigns to distance themselves from those attacks and from those kinds of fear-based approaches. So you end up being able to undermine your opponent without necessarily taking the hit yourself. It can be exacerbated again by different kinds of generative AI tools, the social media algorithms that are amplifying it. So, should the algorithms themselves be regulated? I don't know that we necessarily want a situation where every platform has to comply with a particular kind of prioritisation, for both business reasons and kind of access to information reasons. But I do think we need a lot more transparency in how those work, and we need additional options so that people can choose different kinds of curators of their information.

This transcript has been edited for brevity and clarity.

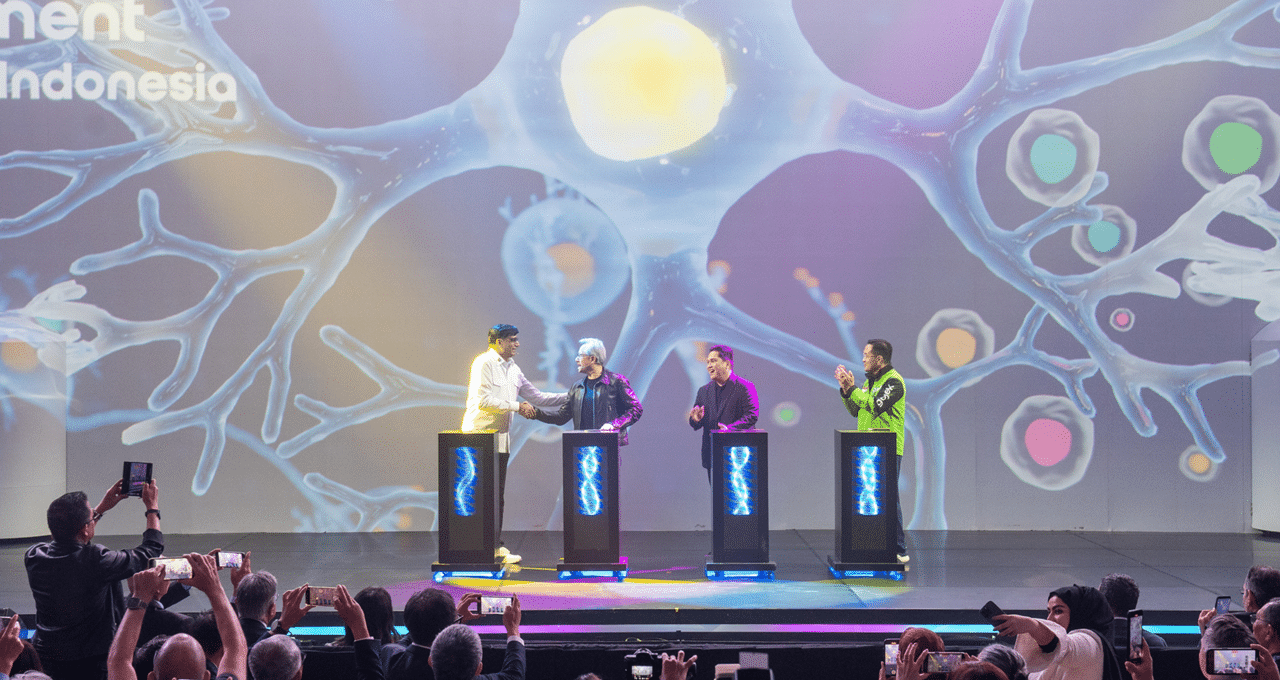

Indonesian technology leaders partner with NVIDIA and others to launch national AI

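Indonesia has launched Sahabat-AI, a sovereign AI initiative developed with NVIDIA and local partners. The project aims to provide open-source Indonesian-language large language models (LLMs) that organisations across the country can use to build AI applications. The initiative emphasises digital sovereignty and inclusion, and includes AI cloud infrastructure developed by Indosat Ooredoo Hutchison, which is speeding up the deployment of industry-specific applications in sectors such as finance and healthcare.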

AI face anonymiser masks human identity in images

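Researchers have developed a method that uses AI, specifically diffusion models, to anonymise faces in images. It alters identity-related facial features while leaving other elements, such as the background and facial expression, intact. The technique preserves the context and purpose of a photo while ensuring that individuals cannot be identified. The method is described as simpler and more effective than previous approaches.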

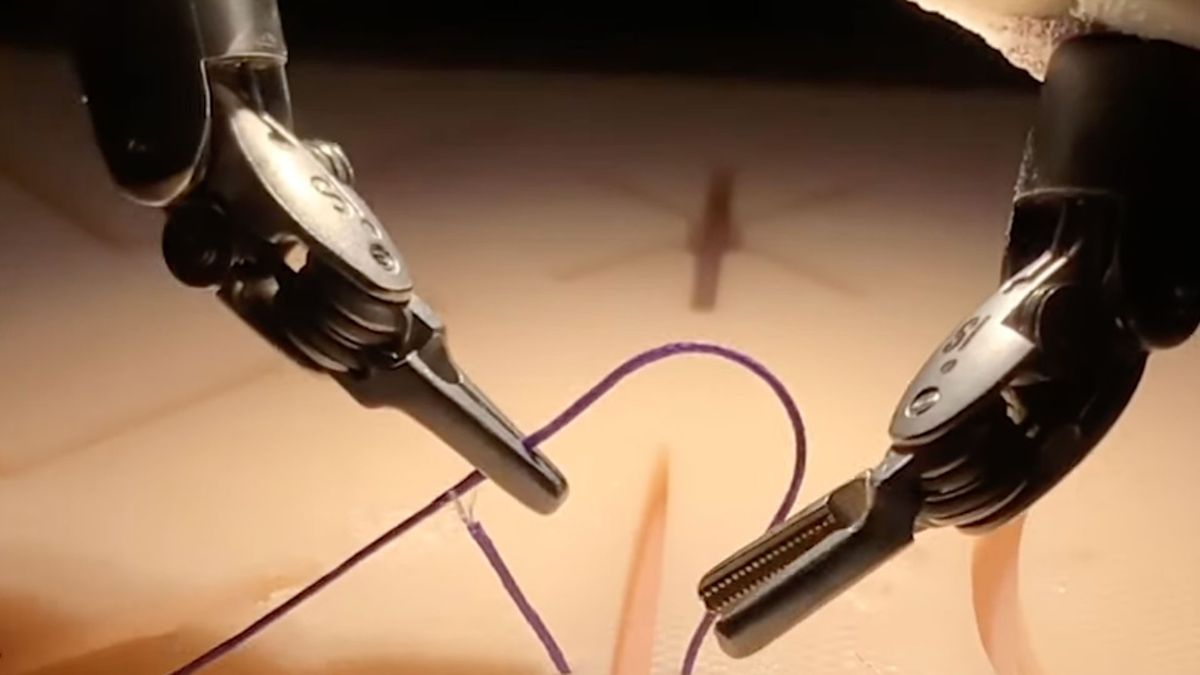

Robot AI successfully performs surgery after training on videos

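Researchers at Johns Hopkins University and Stanford University have developed a surgical robot that, after watching humans perform the tasks, can carry out surgical procedures as precisely as a human. Through imitation learning, the robot learned three specific surgical skills (needle manipulation, tissue lifting and suturing) from videos recorded by wrist-mounted cameras. The AI model can predict the robot motions a procedure requires from camera input alone, which marks an important step forward for medical robotics. The technology could improve precision in complex surgery and reduce medical errors, potentially giving human surgeons more time to deal with unexpected complications.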

Ben Affleck praises Skydance-Paramount deal: David Ellison is an owner, not "managerial class"

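Ben Affleck discussed a range of topics on a CNBC panel, including Skydance's prospective acquisition of Paramount and the impact of generative AI on the film industry. As co-founder and chief executive of Artists Equity, Affleck noted that creatives face challenges because competition for consumers' attention has intensified, but he was optimistic about David Ellison's effort to lead Paramount. He argued that an owner-manager such as Ellison could revitalise the studio system, which has traditionally been run by an executive class with short-term agendas. On AI, Affleck acknowledged its potential to lower costs and democratise filmmaking, but stressed that it will not replace human creativity or judgment in making films.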

OpenAI hopes to launch its first AI agent, named "Operator", in January - SiliconANGLE

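OpenAI plans to launch its first AI agent, named "Operator", in January 2025. Operator will carry out tasks in a web browser, such as booking flights and writing software, based on user instructions. The move fits the trend toward agentic AI: autonomous systems that can complete multi-step tasks with minimal human supervision. Competitors such as Anthropic PBC and Google are developing similar technology, which puts pressure on OpenAI to catch up.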

AI and creativity: Expanding boundaries, not replacing them - opinion

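In an era dominated by AI, Zachi Dinar, head of the Visual Communication Department at HIT Holon Institute of Technology, explores how AI can enhance rather than limit human creativity. Through workshops and lectures in Thailand and Europe, he stresses that while traditional creative processes demand a great deal of time and effort, modern AI-supported tools make those processes faster and more accessible without diminishing their depth. Dinar emphasises AI's potential as a creative partner that expands boundaries and opens up possibilities in design and creation that were previously out of reach or too resource-intensive. He argues that strategic investment in AI can drive job creation and economic growth while fostering innovation in fields well beyond the visual arts.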

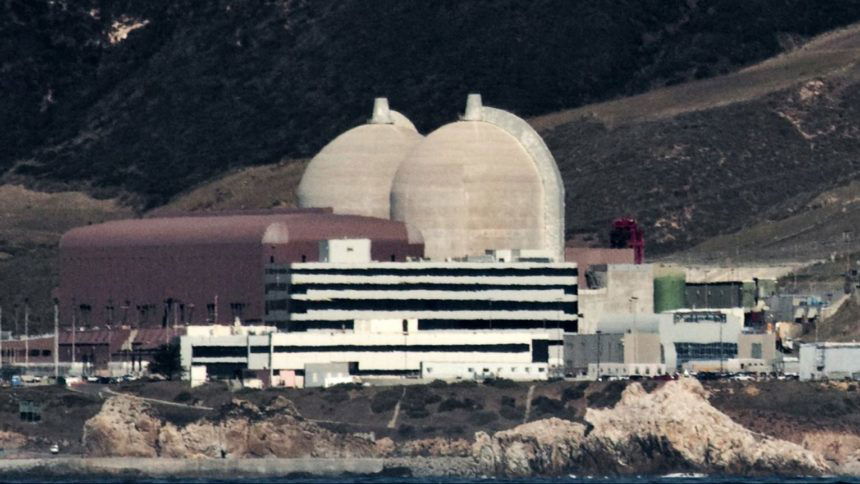

Diablo Canyon Power Plant deploys new AI search solution

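Pacific Gas and Electric Company (PG&E) has announced the deployment of Atomic Canyon's AI-powered nuclear data management system at Diablo Canyon Power Plant, the first commercial installation of on-site generative AI at a US nuclear power plant. The Neutron Enterprise solution will enable fast document search across the US Nuclear Regulatory Commission's ADAMS document system and is expected to cut search times significantly. PG&E stressed that the innovation supports efficient, safe operations at Diablo Canyon, which produces 9% of California's electricity and 17% of its zero-carbon energy.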

OpenAI, Australia and the EU each put forward their own AI rules | PYMNTS.com

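Major jurisdictions are diverging on AI regulation, with Australia committing to strict oversight and the EU advancing its AI Act. Banks and financial firms are increasing their use of AI technology, which is sharpening the divide between countries that favour strict controls and those that advocate minimal intervention. The European Commission has launched a consultation to develop guidelines for the EU's new AI Act, focusing on how to interpret what counts as an AI system and on prohibited practices. Australian industry minister Ed Husic confirmed the country's commitment to protective regulation, despite possible opposition from the incoming US administration led by Donald Trump. OpenAI plans to present an ambitious national infrastructure blueprint in Washington that aims to secure US competitiveness in the AI race by proposing special economic zones, expanded energy capacity and a North American AI alliance.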

Wisconsin workforce event teaches business leaders about AI - Fox21Online

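As part of the state's Winning with Wisconsin's Workforce event series, Wisconsin business leaders gathered at a forum in Superior to discuss artificial intelligence (AI). The event, hosted by the Northwest Wisconsin Workforce Investment Board and the Wisconsin Department of Workforce Development, aimed to introduce AI tools and show safe ways to implement them across industries. Attendees shared their experiences and learned how AI can be used in the workplace.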